Related Rants

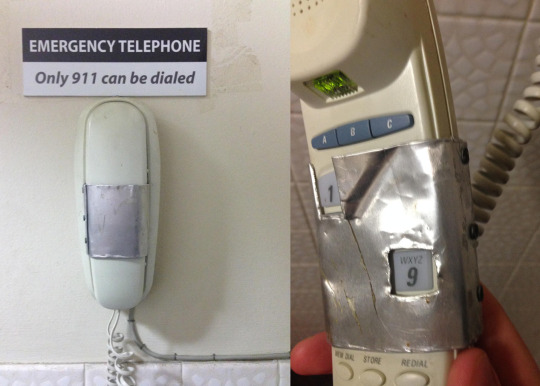

What only relying on JavaScript for HTML form input validation looks like

What only relying on JavaScript for HTML form input validation looks like Found something true as 1 == 1

Found something true as 1 == 1

For learning purposes, I made a minimal TensorFlow.js re-implementation of Karpathy’s minGPT (Generative Pre-trained Transformer). One nice side effect of having the 300-lines-of-code model in a .ts file is that you can train it on a GPU in the browser.

https://github.com/trekhleb/...

The Python and PyTorch version still seems much more elegant and easier to read though...
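For anyone wondering what the core of such a model looks like, here is a rough sketch of single-head causal self-attention in plain TypeScript. It is not taken from the linked repository and does not use TensorFlow.js. The `Matrix` type and all function names are made up for illustration, and a real GPT block would add learned projections, multiple heads, layer norm, and an MLP on top.

```typescript
// Illustrative sketch only: plain TypeScript, no TensorFlow.js,
// and not the code from the linked repository.
type Matrix = number[][];

// Multiply an (n x k) matrix by a (k x m) matrix.
function matmul(a: Matrix, b: Matrix): Matrix {
  return a.map((row) =>
    b[0].map((_, j) => row.reduce((sum, v, k) => sum + v * b[k][j], 0))
  );
}

// Numerically stable softmax over one row.
function softmax(row: number[]): number[] {
  const max = Math.max(...row);
  const exps = row.map((v) => Math.exp(v - max));
  const total = exps.reduce((s, v) => s + v, 0);
  return exps.map((v) => v / total);
}

// Attention weights for one head with a causal mask:
// position i may attend only to positions j <= i.
function causalAttentionWeights(q: Matrix, k: Matrix): Matrix {
  const scale = 1 / Math.sqrt(q[0].length);
  return q.map((qi, i) => {
    const scores = k.map((kj, j) =>
      j <= i ? scale * qi.reduce((s, v, d) => s + v * kj[d], 0) : -Infinity
    );
    return softmax(scores);
  });
}

// Single-head causal self-attention: attention weights times values.
function causalSelfAttention(q: Matrix, k: Matrix, v: Matrix): Matrix {
  return matmul(causalAttentionWeights(q, k), v);
}
```

The mask is the part that makes the model autoregressive: future positions get a score of `-Infinity`, so they end up with zero weight after the softmax and each token only sees what came before it.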

devrant

gpt

ml

transformers

js

ai